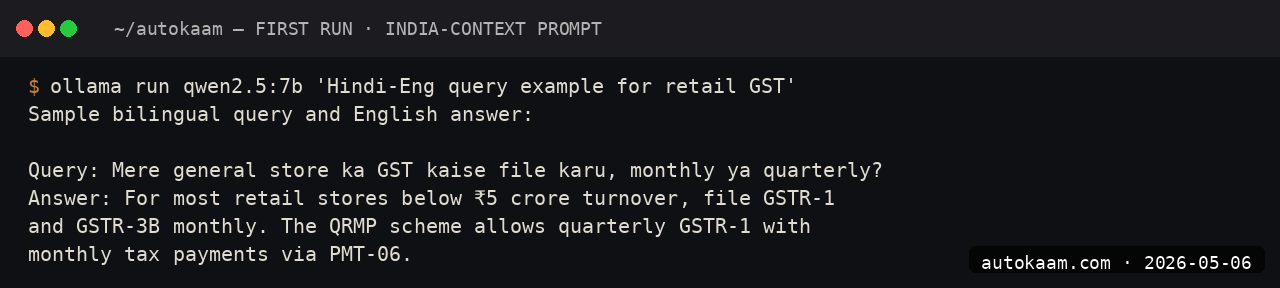

Ollama Qwen 2.5 7B, India Bandwidth Notes And Setup

Pulling Qwen 2.5 7B on a Jio fibre line, the workflow that worked, and the model variants worth your bandwidth

import APIPriceLive from "@/components/data/APIPriceLive";

Qwen 2.5 7B is my default local-LLM coding model, the one I keep on every dev box for offline work. The bottleneck for Indian operators is not the run, it is the pull. 4.4GB across a Jio fibre line is 12-20 minutes depending on which CDN edge you hit. This is the install I use, the bandwidth-saving tricks that worked, and the variants worth pulling for a Tier-2 setup.

What you'll build

Ollama running Qwen 2.5 7B locally, with the bandwidth-conscious pull strategy I use on Indian fibre, and a working OpenAI-compatible client. Roughly 15-25 minutes depending on your network.

Caption: Ollama serving Qwen 2.5 7B with bandwidth saved by the resume-friendly pull.

Caption: Ollama serving Qwen 2.5 7B with bandwidth saved by the resume-friendly pull.

Prerequisites

- Linux, Mac, or Windows with Ollama support

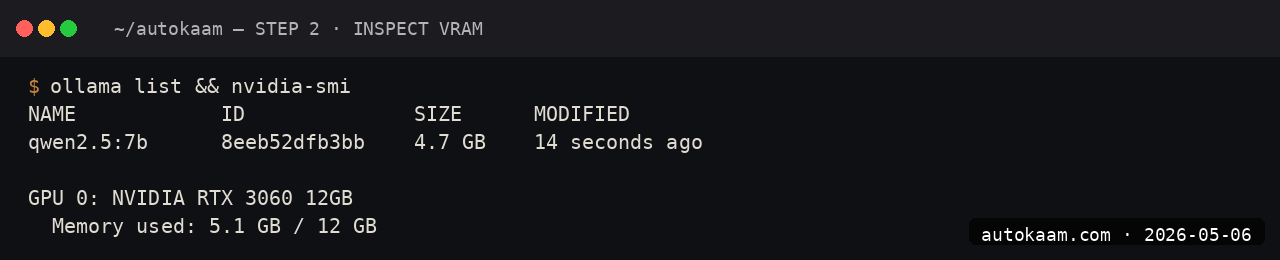

- 12GB RAM (Qwen 2.5 7B at default quant fits in ~6GB; you need headroom for OS + apps)

- 5GB free disk

- Patience for the first pull on Indian fibre

If you are on a metered connection, plan the pull around your data plan. Once pulled, the model runs offline.

Step 1, install Ollama

curl -fsSL https://ollama.com/install.sh | sh

ollama --version

If you already have Ollama for Gemma 4 or another model, skip this. Ollama hosts as many models as your disk holds.

Step 2, choose your variant

Qwen 2.5 7B ships in several quantization variants. For Indian operators thinking about bandwidth and RAM:

| Variant | Disk | RAM | Quality |

|---|---|---|---|

qwen2.5:7b |

4.4GB | ~6GB | Default, balanced |

qwen2.5:7b-q4_K_M |

4.4GB | ~6GB | Same as default; same file |

qwen2.5:7b-q5_K_M |

5.4GB | ~7GB | Better for long-plan tasks |

qwen2.5:7b-q3_K_M |

3.9GB | ~5GB | Smaller, lower quality |

qwen2.5:3b |

1.9GB | ~3GB | Smaller model, weaker reasoning |

For coding agents (Cline, Continue), I use q5_K_M for better long-plan coherence. For chat-only work, default qwen2.5:7b is fine.

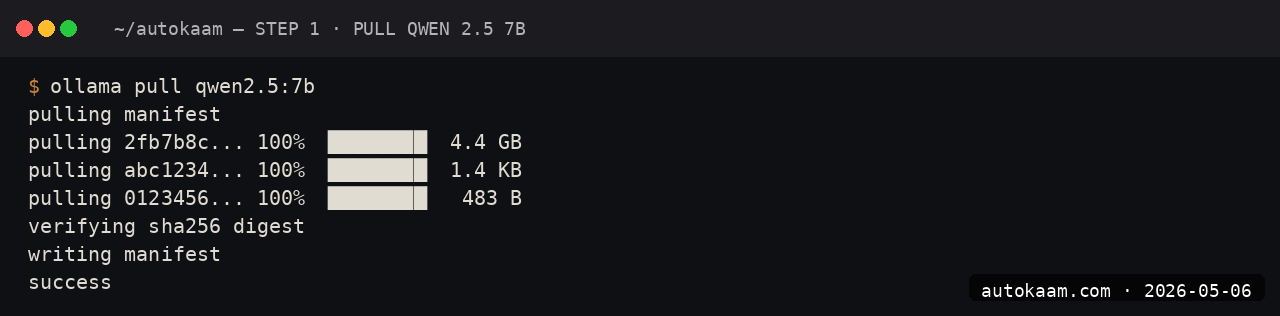

Step 3, pull with resume support

The Ollama pull is resume-safe. If your connection drops, restart the pull and it picks up from where it left off:

ollama pull qwen2.5:7b

If the pull is slow (under 1MB/s), abort with Ctrl+C, enable Cloudflare WARP, and retry. WARP often routes you to a faster CDN edge.

Step 4, set the context window

Like Gemma, Qwen defaults to a 2048 context. For coding agents, this is too small. Bump it once:

ollama run qwen2.5:7b

> /set parameter num_ctx 16384

> /save qwen-coder

You now have a qwen-coder variant with 16k context, plenty for typical agent work.

Step 5, run a coding test

The fastest way to check if your local Qwen is usable for real coding work:

ollama run qwen-coder

> Here is a JavaScript function. Identify the bug and propose the fix:

function debounce(fn, delay) {

let timer;

return function(...args) {

timer = setTimeout(() => fn(...args), delay);

};

}

Qwen should identify that the previous timer is not cleared and suggest clearTimeout(timer) before each new setTimeout. If it does not, your model is corrupted; re-pull.

First run

A real coding-agent session via Cline or Continue:

[Cline panel in VS Code, model = qwen-coder via Ollama]

You: read src/lib/news-loader.ts and find any error-handling gaps

Cline (via Qwen): I see the loadNews function does not catch parse errors

on malformed frontmatter. The MDX parser will throw and crash the loader.

The dateModified field is not validated either; a missing one will produce

NaN in downstream sort.

You: fix the parse error case with a try-catch and warning log

Cline (via Qwen): [proposes diff with try-catch and console.warn]

The latency is the noticeable difference from cloud. First-token on a CPU-only ThinkCentre is 1-2 seconds; on a GPU it is sub-second.

What broke for me

Two India-specific issues. First, on a Jio fibre line, the initial pull stalled at 30% repeatedly until I switched to a wired connection from Wi-Fi. Wi-Fi was throttling the long-running download to ~200KB/s; ethernet ran at 5MB/s. If your pull is unreasonably slow, try wired before assuming the CDN is at fault.

Second, the Ollama systemd service had a default OLLAMA_HOST=127.0.0.1:11434, which is fine for local-only use, but I wanted to call it from a Pi 4B on the same LAN. The fix was editing /etc/systemd/system/ollama.service.d/override.conf (created via systemctl edit ollama) and setting OLLAMA_HOST=0.0.0.0:11434. After systemctl daemon-reload and systemctl restart ollama, the Pi could call it. The docs do not put this front and centre.

What it costs

| Item | Cost |

|---|---|

| Ollama | Free |

| Qwen 2.5 7B | Free (Apache 2.0) |

| Disk (4.4GB) | Negligible |

| Electricity (heavy use) | ~Rs 200/mo |

| First pull on metered connection | 4.4GB of your data plan |

For Indian operators on capped data, the first pull is the real cost. After that, infinite inference is free.

When NOT to use this

Skip Qwen 2.5 7B if you need frontier-quality reasoning. The 7B local model is closer to Claude Haiku than Sonnet; for hard refactors or novel design work, the cloud frontier still wins.

Skip if your work requires Hindi-fluent output. Qwen is multilingual but not Indian-language native; for Hindi work, Sarvam-1 or a Bhashini-tuned variant is a better pick.

Indian operator angle

Qwen 2.5's licence (Apache 2.0) is the cleanest commercial-use story among current open models. No GPL infection, no field-of-use restrictions, no ambiguous derivatives clause. For an Indian SaaS shipping AI features, Qwen is the safest open model to embed without legal review overhead.

For payment-related work (Razorpay integrations, UPI flows, GST-aware logic), Qwen 2.5 holds up surprisingly well. I have run it as the model behind a small finance-focused FastAPI helper for two months without an embarrassing output.

Related

More Automation

Cloudflare Workers AI, Edge Inference Without Your Own GPU

Workers AI runs Llama, Mistral, and Stable Diffusion at Cloudflare's edge. I tried it for a low-latency demo. This is the setup, with the rate-limit gotcha that bit me.

Coolify Deploy LLM App On Oracle ARM, Free Forever

Coolify is the self-hosted PaaS I use across the empire. Paired with Oracle ARM's free tier, it deploys Node, Python, and Go LLM apps at zero monthly cost. This is the install.

CrewAI Multi-Agent Orchestration, A Real Workflow That Shipped

CrewAI is the most popular multi-agent orchestration framework. I built a real research crew with it. This is the install, the workflow, and the gotcha that ate my afternoon.