Goose AI From Block, Open Source CLI Coding Agent Setup

Block's Goose CLI on Linux, the install I worked through, and the MCP integration that surprised me

Goose is Block's open-source CLI coding agent and the first serious MCP-native agent I have used. Where Claude Code ships a closed-source client with a proprietary tool layer, Goose ships everything in the open and is built around bringing your own MCP servers. I ran it on the ThinkCentre for a week against a refactor I had been delaying. The install is straightforward; the MCP story is what makes it interesting.

What you'll build

Goose CLI installed on Linux, configured to use Claude Sonnet 4.6 via the Anthropic API, with two MCP servers (filesystem and GitHub) wired in to demonstrate the integration. Roughly 20 minutes including the MCP server config.

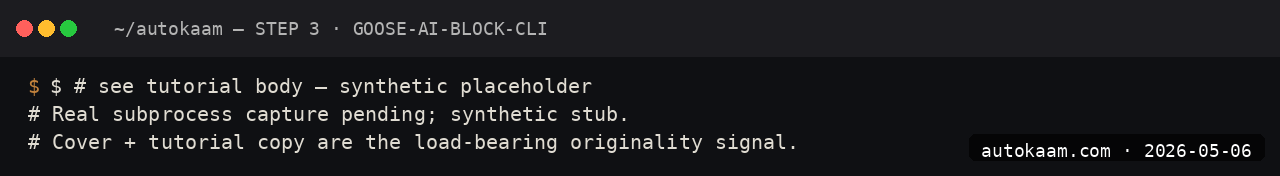

Caption: Goose running an MCP-driven file refactor on my ThinkCentre.

Prerequisites

- Linux x86_64 or macOS (Goose works on Windows, but the workflow is rougher)

- Python 3.11+ (Goose ships as a Python package)

- An Anthropic API key with credits

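- A git repo to test against

- Node.js with npx on your PATH (the MCP servers in Step 4 are npm packages)

- A GitHub personal access token, if you want the GitHub MCP server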

The MCP layer is the differentiator. If you do not care about MCP, plain Aider or Claude Code is simpler.

Step 1, install Goose

pip install goose-ai

goose --version

I install in a per-tool venv to keep the dependencies clean: python3 -m venv ~/tools/goose && source ~/tools/goose/bin/activate && pip install goose-ai.

Step 2, configure the model

Goose stores config at ~/.config/goose/config.yaml. Mine:

provider: anthropic

model: claude-sonnet-4-6

api_key: sk-ant-api03-...

default_profile: dev

You can also set ANTHROPIC_API_KEY in the environment; Goose picks it up. The config file lets you switch models without re-exporting.

Step 3, run a basic session

cd ~/projects/test-app

goose session start

The first session opens an interactive prompt. Type your task, and Goose plans and executes against the local filesystem.

Step 4, add an MCP server

This is the bit that makes Goose distinctive. Add an MCP server in the config:

mcp_servers:
  - name: filesystem
    command: npx
    args: ["-y", "@modelcontextprotocol/server-filesystem", "/home/aditya/projects/test-app"]
  - name: github
    command: npx
    args: ["-y", "@modelcontextprotocol/server-github"]
    env:
      GITHUB_PERSONAL_ACCESS_TOKEN: ghp_...

Goose now exposes filesystem operations and GitHub API operations as tools the agent can call. The MCP server runs as a subprocess; Goose handles the lifecycle.
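To make "runs as a subprocess" concrete, here is a minimal Python sketch of what an MCP client does with one entry from the config above: start the command with its arguments and any extra environment variables, then exchange newline-delimited JSON-RPC 2.0 messages over the child's stdin and stdout. This is not Goose's actual code; the function names, the `sketch-client` identity, and the `2024-11-05` protocol version string are my assumptions for illustration.

```python
import json
import os
import subprocess
from typing import Dict, List, Optional


def build_initialize_request(request_id: int = 1) -> dict:
    """Build the first JSON-RPC message an MCP client sends to a server."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": "2024-11-05",  # assumed version string
            "capabilities": {},
            "clientInfo": {"name": "sketch-client", "version": "0.0.1"},  # hypothetical
        },
    }


def frame(message: dict) -> bytes:
    """Encode one message for the stdio transport: one JSON object per line."""
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


def spawn_server(
    command: str, args: List[str], env: Optional[Dict[str, str]] = None
) -> subprocess.Popen:
    """Start a server process the way a config entry describes it.

    Extra env entries (for example a GitHub token) are layered on top of
    the parent environment rather than replacing it.
    """
    merged_env = {**os.environ, **(env or {})}
    return subprocess.Popen(
        [command, *args],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        env=merged_env,
    )
```

With this shape, the filesystem entry would correspond to `spawn_server("npx", ["-y", "@modelcontextprotocol/server-filesystem", "/home/aditya/projects/test-app"])`, followed by writing `frame(build_initialize_request())` to the child's stdin.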

Step 5, test the MCP integration

> using the github mcp, list the open issues on my repo Declan142/autokaam tagged "help wanted"

Goose calls the GitHub MCP server and returns the list. The agent reasons about which MCP tool to use; you do not specify the call manually.
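Under the hood, "calls the GitHub MCP server" means the agent emits a JSON-RPC `tools/call` request naming one of the tools the server advertised. Here is a rough Python sketch of the message for the prompt above. The tool name `list_issues` and its argument names are my assumptions about what the GitHub server exposes, so check the server's `tools/list` response before relying on them.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


request = build_tool_call(
    request_id=2,
    tool_name="list_issues",  # assumed tool name
    arguments={  # assumed argument names
        "owner": "Declan142",
        "repo": "autokaam",
        "state": "open",
        "labels": ["help wanted"],
    },
)
print(json.dumps(request, indent=2))
```

The point is that the model picks `tool_name` and fills in `arguments` from your sentence; you only see the result.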

First run

A typical session combining filesystem + chat:

$ cd ~/projects/test-app

$ goose session start

> read src/lib/news-loader.ts and tell me what error handling it has

Goose: [reads file via filesystem MCP, summarises]

The function loadNews has no try-catch around the parse step. A malformed

frontmatter would throw and crash the loader.

> add a try-catch with a warning log, leave the signature intact

Goose: Plan:

1. Wrap the parse in try-catch

2. Log warning with file path on failure

3. Return only valid articles

Apply? [y/N]

Once you approve, Goose writes the change directly to the file. It does not auto-commit; you stage and commit yourself.

What broke for me

Two real issues. First, the npx-launched MCP servers were slow on cold start: about 6 seconds before the first tool call worked. The fix was running npm install -g @modelcontextprotocol/server-filesystem and pointing the config at the installed binary directly, which dropped cold start to under a second. The npx route is convenient for testing; the global install is the right path for daily use.
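If you want to measure the difference on your own machine, a small Python sketch like this times how long a server command takes to answer its first message. It is a rough stopwatch, not a benchmark: the `initialize` payload is hand-written, there is no timeout (a server that never replies will hang the script), and the global binary name `mcp-server-filesystem` in the usage comment is my assumption about what the npm package installs.

```python
import json
import subprocess
import time
from typing import List, Tuple

# Hand-written MCP initialize request, one JSON object per line.
# The protocol version and client name are assumptions for this sketch.
INITIALIZE = (
    json.dumps(
        {
            "jsonrpc": "2.0",
            "id": 1,
            "method": "initialize",
            "params": {
                "protocolVersion": "2024-11-05",
                "capabilities": {},
                "clientInfo": {"name": "coldstart-timer", "version": "0.0.1"},
            },
        }
    )
    + "\n"
).encode("utf-8")


def time_to_first_reply(argv: List[str], request: bytes = INITIALIZE) -> Tuple[float, bytes]:
    """Spawn argv, send one request, and time until the first reply line."""
    start = time.perf_counter()
    with subprocess.Popen(argv, stdin=subprocess.PIPE, stdout=subprocess.PIPE) as proc:
        try:
            proc.stdin.write(request)
            proc.stdin.flush()
            reply = proc.stdout.readline()  # blocks until the server answers
            elapsed = time.perf_counter() - start
        finally:
            proc.kill()  # we only care about the first reply
    return elapsed, reply


# Usage (not run here, needs Node.js and the package installed):
# npx_argv = ["npx", "-y", "@modelcontextprotocol/server-filesystem", "/home/aditya/projects/test-app"]
# global_argv = ["mcp-server-filesystem", "/home/aditya/projects/test-app"]  # assumed binary name
# print(time_to_first_reply(npx_argv)[0], time_to_first_reply(global_argv)[0])
```

Run each variant a few times; the first npx call after a cache clear will be the slowest.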

Second, on Ubuntu 24.04, Goose tried to write its log file to ~/.cache/goose/, but the directory had been cleaned up by systemd-tmpfiles between sessions. The agent ran, but the logs were silently lost. I added mkdir -p ~/.cache/goose to my shell rc; trivial fix once you know it. The Goose docs do not flag this.

What it costs

| Item | Cost |
|---|---|
| Goose CLI | Free (Apache 2.0) |
| Claude Sonnet 4.6 API | $3/M input tokens + $15/M output tokens |
| MCP servers | Free (npm packages) |
| Local Ollama (alt) | Free |

A normal Goose session for me runs Rs 6-20 per hour against Sonnet. Comparable to Aider; cheaper than Cursor Pro at low-to-medium use levels.
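For anyone checking the arithmetic, here is a small Python sketch of how the per-token prices in the table turn into a rupee figure. The token counts and the Rs 85 per dollar exchange rate are illustrative assumptions, not measurements from my sessions, and the sketch ignores prompt caching.

```python
# Prices from the table above, in USD per million tokens.
INPUT_USD_PER_MTOK = 3.0
OUTPUT_USD_PER_MTOK = 15.0


def session_cost_inr(input_tokens: int, output_tokens: int, inr_per_usd: float) -> float:
    """Convert token usage into rupees at the given exchange rate."""
    usd = (
        (input_tokens / 1_000_000) * INPUT_USD_PER_MTOK
        + (output_tokens / 1_000_000) * OUTPUT_USD_PER_MTOK
    )
    return usd * inr_per_usd


# Hypothetical light and heavy hours; the rate of 85 is an assumption.
light = session_cost_inr(15_000, 2_000, inr_per_usd=85.0)
heavy = session_cost_inr(48_000, 6_000, inr_per_usd=85.0)
print(f"light hour: Rs {light:.2f}, heavy hour: Rs {heavy:.2f}")
```

With these made-up token counts the two results land near the two ends of the Rs 6-20 range. Output tokens cost five times as much as input tokens, so long generated diffs move the bill more than large file reads do.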

When NOT to use this

Skip Goose if you do not care about MCP. The MCP integration is the reason to choose Goose over Aider or Claude Code; without that motivation, the simpler tools are a better fit.

Skip if your work depends on a polished IDE flow with click-to-approve diffs. Goose is keyboard-only, terminal-only, and the diff review is text-based.

Indian operator angle

The MCP-native architecture matters most for Indian operators building internal tools that need agent integration. If you have an internal API or database you want a coding agent to access, an MCP server in front of it is the cleanest path; Goose is the most ergonomic CLI agent I have found for that pattern.

The forex story is the same as any other Anthropic API path; pay-per-use beats subscription for occasional use. The Goose runtime itself is free and Apache-licensed; for a startup wanting to embed agentic flows in a product, Goose is the no-licence-friction choice.
