Codex CLI Install And First Task, OpenAI's Terminal Coding Agent

OpenAI Codex CLI on Linux, the install path I worked out, and the workflow that beat my Cursor habit


Codex CLI is OpenAI's terminal coding agent, the spiritual sibling of Claude Code. It runs against GPT-5 Codex by default and works with ChatGPT Plus and Pro subscriptions. I installed it on my ThinkCentre and ran it for a week against a Next.js refactor I had been stalling on. The install is straightforward; the workflow surprised me.

What you'll build

Codex CLI installed on Linux, signed in via ChatGPT Plus or an API key, configured to use GPT-5 Codex, with a sample project where you can test the tool-use loop. Roughly 15 minutes.

Caption: Codex CLI in agent mode running against a real refactor.


Prerequisites

- Linux x86_64, macOS (Apple Silicon or Intel), or Windows with WSL

- Node 20+ (the Codex CLI ships as an npm package)

- A ChatGPT Plus subscription ($20/mo) OR an OpenAI API key with credits

- A git repo to test against

The Plus subscription gets you reasonable Codex usage with no per-token billing. The API key path is pay-per-use; cheaper for occasional users, more expensive for daily drivers.

Step 1, install the CLI

```bash
sudo npm install -g @openai/codex
codex --version
```

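With a distro-packaged Node, where npm's global prefix is /usr, the package puts the codex binary at /usr/bin/codex. If you skip sudo, npm tries to write to /usr/lib/node_modules and fails with a permission error. The non-sudo workaround is npm config set prefix ~/.npm-global, then adding ~/.npm-global/bin to your PATH.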

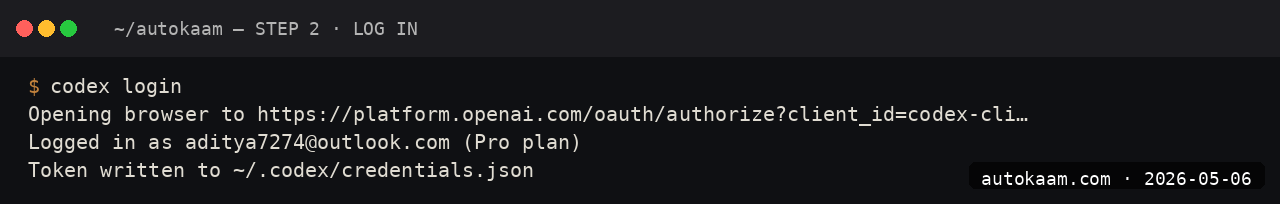

Step 2, sign in

Run codex in any directory. The first run kicks off the auth flow:

```bash
cd ~/projects/test-app
codex
```

You can sign in with your ChatGPT Plus account (browser-based OAuth) or supply an API key with OPENAI_API_KEY=sk-.... The token persists in ~/.codex/auth.json. Do not commit it.

Step 3, set the model

The default model is GPT-5 Codex, but you can pin a faster one for routine tasks:

```bash
codex --model gpt-5-codex

# or for cheaper, faster work:
codex --model gpt-5-mini
```

I run gpt-5-codex for planning and gpt-5-mini for inline edits. The mini model responds in roughly half the time and is routinely good enough for one-line fixes.
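If you switch models by task type a lot, a tiny wrapper saves some typing. Here is a sketch built on a hypothetical two-way split between "plan" and "edit" tasks, mirroring the habit above. It only builds the argument list for codex --model, and it launches codex only when you pass a task kind on the command line. The file name codex-task.ts is made up for illustration.

```typescript
import { spawnSync } from "node:child_process";

// Hypothetical task kinds mirroring the planning / inline-edit split.
type TaskKind = "plan" | "edit";

// Model per task kind: the larger model for planning, the mini model for small edits.
const MODEL_FOR: Record<TaskKind, string> = {
  plan: "gpt-5-codex",
  edit: "gpt-5-mini",
};

// Builds the codex argument list for a task kind, using only the --model flag.
function codexArgs(kind: TaskKind): string[] {
  return ["--model", MODEL_FOR[kind]];
}

// Only launches codex when a valid kind is passed, e.g. `npx tsx codex-task.ts edit`
// (codex-task.ts is a hypothetical file name).
const requested = process.argv[2];
if (requested === "plan" || requested === "edit") {
  const result = spawnSync("codex", codexArgs(requested), { stdio: "inherit" });
  process.exitCode = result.status ?? 1;
}
```

Keeping the model table in one object means that when you change your mind about which model handles which job, you edit one line rather than your shell history.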

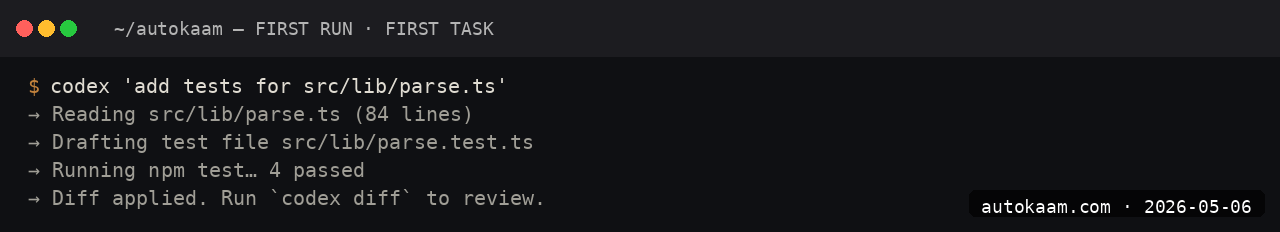

Step 4, give it a real task

The clearest test of any coding agent is a real refactor. Mine on this run:

```text
You are working on src/lib/news-loader.ts. The function loadNews returns NewsArticle[] but does not handle the case where MDX frontmatter parses but is missing required fields. Add a validation step that filters out invalid articles, logs a warning per drop, and returns the valid set. Run npm run typecheck after.
```

Codex reads the file, proposes a plan with checkpoints, and asks for permission before each change. The approval flow is granular: you can accept everything at once or step through change by change.
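For reference, here is a minimal sketch of the kind of validation step that prompt asks for. It is not what Codex produced. The article does not show the real NewsArticle type, so the slug, title, and date fields below are hypothetical placeholders, and the frontmatter is treated as an untyped record.

```typescript
// Hypothetical shape: the real NewsArticle type is not shown in the article.
interface NewsArticle {
  slug: string;
  title: string;
  date: string; // date string taken from frontmatter
}

// Parsed MDX frontmatter: keys may be missing or have the wrong type.
type RawFrontmatter = Record<string, unknown>;

const REQUIRED_FIELDS = ["slug", "title", "date"] as const;

// Returns the required fields that are missing or not non-empty strings.
function missingFields(fm: RawFrontmatter): string[] {
  return REQUIRED_FIELDS.filter((key) => {
    const value = fm[key];
    return typeof value !== "string" || value.trim() === "";
  });
}

// Keeps only entries with every required field, logging one warning per drop.
function validateArticles(
  raw: RawFrontmatter[],
  warn: (msg: string) => void = console.warn,
): NewsArticle[] {
  const valid: NewsArticle[] = [];
  raw.forEach((fm, index) => {
    const missing = missingFields(fm);
    if (missing.length > 0) {
      // Fall back to the array position when there is no usable slug.
      const id = typeof fm.slug === "string" && fm.slug !== "" ? fm.slug : `#${index}`;
      warn(`Dropping article ${id}: missing ${missing.join(", ")}`);
      return;
    }
    valid.push({
      slug: fm.slug as string,
      title: fm.title as string,
      date: fm.date as string,
    });
  });
  return valid;
}
```

The warn callback defaults to console.warn, but it is injectable so a test can capture the messages. In a real loader you would run this over the parsed frontmatter before returning the array.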

Step 5, write a CODEX.md (the equivalent of CLAUDE.md)

Codex reads CODEX.md from the project root if present. The format is identical to CLAUDE.md:

```markdown
# Project context

- Stack: Next.js 16 + TypeScript + Tailwind v3
- Backend: PocketBase
- Deploy: Cloudflare Pages, static export

# Don't

- Do not introduce new dependencies without asking
- Do not use next/image (breaks static export)

# Do

- Use the cn() helper for className merges
```

After this file went in, the agent stopped introducing rogue dependencies. It is the single biggest quality lever for any of these CLIs.
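The "no new dependencies" rule is easy to double-check after a session. Here is a minimal sketch that compares the dependencies and devDependencies maps of package.json before and after the agent's changes, for example the version at git HEAD against the working copy. The function names are hypothetical; this is not a Codex feature.

```typescript
// The subset of package.json this check cares about.
interface PackageJson {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Lists every package name declared in dependencies or devDependencies.
function declaredPackages(pkg: PackageJson): string[] {
  return Object.keys(pkg.dependencies ?? {}).concat(Object.keys(pkg.devDependencies ?? {}));
}

// Returns packages declared in `after` but not in `before`, deduplicated and sorted.
function addedPackages(before: PackageJson, after: PackageJson): string[] {
  const known = declaredPackages(before);
  return declaredPackages(after)
    .filter((name, i, all) => known.indexOf(name) === -1 && all.indexOf(name) === i)
    .sort();
}
```

To get the two inputs, you could JSON.parse the output of git show HEAD:package.json and the current file. A non-empty result is your cue to ask the agent why.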

First run

A typical session for me:

```text
$ cd ~/projects/test-app
$ codex
> read src/components/NewsCard.tsx and tell me where the image rendering can be split out

Codex: [reads file]
The image rendering occupies lines 45-89, primarily the responsive picture
element with srcset handling. I would split that into ImageBox.tsx with the
caption logic also moving across.

> do that split

Codex: Plan:
1. Create src/components/ImageBox.tsx with the picture element
2. Update NewsCard.tsx to import ImageBox
3. Run npm run typecheck
Proceed? [y/N]
```

The agent does not commit. You stage and commit as you would normally.

What broke for me

Two real issues. First, the OAuth callback uses port 1456 (different from Claude Code's 8080), and I had a Coolify deploy holding it. Codex printed "auth flow timed out" with no port hint. The fix was to run lsof -i :1456, kill the offending process, and retry. The Codex docs do not name the port; I figured it out from the verbose log.
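If you would rather check the callback port before signing in, a small Node script can try to bind it. Here is a minimal sketch that assumes port 1456, the port observed above; check your own verbose log if yours differs. It only reports whether the port is free and does not kill anything.

```typescript
import { createServer } from "node:net";

// Resolves true if `port` can be bound on `host`, false if the bind fails with EADDRINUSE.
function isPortFree(port: number, host = "127.0.0.1"): Promise<boolean> {
  return new Promise((resolve, reject) => {
    const server = createServer();
    server.once("error", (err: NodeJS.ErrnoException) => {
      if (err.code === "EADDRINUSE") resolve(false);
      else reject(err);
    });
    server.once("listening", () => {
      // The bind succeeded; release the port immediately so the login flow can use it.
      server.close(() => resolve(true));
    });
    server.listen(port, host);
  });
}

// Assumed callback port, taken from the author's verbose log.
const CALLBACK_PORT = 1456;

isPortFree(CALLBACK_PORT)
  .then((free) => {
    console.log(
      free
        ? `Port ${CALLBACK_PORT} is free; run codex to sign in.`
        : `Port ${CALLBACK_PORT} is in use; find the owner with lsof -i :${CALLBACK_PORT}.`,
    );
  })
  .catch((err) => console.error(err));
```

Trying to bind the port, rather than parsing lsof output, keeps the check portable across Linux and macOS.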

Second, with gpt-5-codex, the agent occasionally tried to run pip commands in a Node project. I added a one-line note to CODEX.md, "This project is Node and bun, not Python. Do not run pip." After that, no more rogue Python attempts. The model has strong priors about which tools to reach for; the rules file is the cheapest way to shape them.

What it costs

| Item | Cost |
|---|---|
| Codex CLI | Free |
| ChatGPT Plus | $20/mo (~Rs 1,660) |
| ChatGPT Go (India) | Rs 399/mo |
| OpenAI API | Pay per token |
| GPT-5 Codex API | $5 per 1M input tokens + $15 per 1M output tokens |

The ChatGPT Go plan in India at Rs 399/mo is the cheapest serious coding-agent subscription on the market, period. The catch is that Go's Codex usage cap is lower than Plus's. For casual users it works; for daily drivers, Plus is the right tier.
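To see where pay-per-token stops being cheaper than a flat subscription, the arithmetic is short. This sketch uses the per-token rates from the cost table above (check OpenAI's current pricing before relying on them) and ignores any cached-input discounts. The monthly token volumes are made-up examples, not measurements.

```typescript
// Rates in USD per million tokens, as listed in the cost table; verify against current pricing.
const INPUT_USD_PER_M = 5;
const OUTPUT_USD_PER_M = 15;
const PLUS_USD_PER_MONTH = 20;

// API cost in USD for a given number of input and output tokens.
function apiCostUsd(inputTokens: number, outputTokens: number): number {
  return (inputTokens / 1_000_000) * INPUT_USD_PER_M + (outputTokens / 1_000_000) * OUTPUT_USD_PER_M;
}

// Hypothetical monthly volumes. Agent sessions tend to be input-heavy because
// files and conversation context are sent back to the model on each turn.
const lightMonth = apiCostUsd(2_000_000, 200_000);
const heavyMonth = apiCostUsd(20_000_000, 2_000_000);

console.log(`light month: $${lightMonth.toFixed(2)} vs Plus $${PLUS_USD_PER_MONTH}`);
console.log(`heavy month: $${heavyMonth.toFixed(2)} vs Plus $${PLUS_USD_PER_MONTH}`);
```

With these inputs the light month comes to $13 and the heavy month to $130. That is the occasional-user versus daily-driver split from the prerequisites, expressed in numbers you can swap for your own usage.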

When NOT to use this

Skip Codex CLI if you are committed to the Anthropic ecosystem. The model and tool-use semantics are noticeably different from Claude Code; switching contexts within a day costs you more than picking one and sticking with it.

Skip it if your project is on native Windows without WSL. The CLI runs on Windows, but the workflow assumes a POSIX shell; WSL fixes that.

Indian operator angle

The ChatGPT Go plan at Rs 399/mo is genuinely the best value entry-tier I have seen for any AI coding agent. For a fresher developer in a Tier-2 city wanting to learn agent-driven coding, it is the no-brainer first subscription. The Codex usage on Go is sufficient for ~2 hours/day of light agent work; if you outgrow it, Plus at Rs 1,660 is the next step.

GST is not on the OpenAI invoice for Indian users; reverse-charge applies if you are claiming the expense in a registered business. Razorpay is not an option for ChatGPT subscriptions; UPI works on the India-localised Go plan but not for Plus.
