Anthropic Locks Canberra, Crowds Out OpenAI in Asia-Pacific

The MOU is the cheap part. The Sydney office, the AUD$3M science credits, and a future data-center spend are the real fence going up around the region.

Australia's investment in AI safety makes it a natural partner for responsible AI development. This MOU gives our collaboration a formal foundation.

- Anthropic now has safety-institute MOUs in the US, UK, Japan, and Australia. That is a perimeter, not a press release.

- AUD$3M in API credits to four Australian research institutes is cheap customer acquisition for a sovereign-AI procurement cycle.

- A Sydney office plus exploratory data-center spend tells you where the next ANZ enterprise RFP defaults will land.

- If you sell into ANZ public sector, assume Claude is now the incumbent reference model in any 2026 RFP you respond to.

The frontier-model category is consolidating along government lines, and this week's announcement out of Canberra confirms the shape. Anthropic signed an MOU with the Australian government, dropped AUD$3 million in Claude API credits across four research institutes, and confirmed a Sydney office is coming. Read it as a single move, not three. The vendor that lines up safety-institute partnerships, sovereign science deployments, and a local enterprise team in the same week is not announcing a partnership. It is staking a perimeter.

The Deployment

The signed document is a Memorandum of Understanding between Anthropic and the Australian government, formalised when Anthropic CEO Dario Amodei met Prime Minister Anthony Albanese in Canberra. The MOU commits Anthropic to work with Australia's AI Safety Institute on joint safety and security evaluations, share findings on emerging model capabilities and risks, and feed Anthropic Economic Index data to the Australian government to track AI adoption across the workforce. The company says it will initially focus on natural resources, agriculture, healthcare, and financial services.

Alongside the MOU, Anthropic announced AUD$3 million in Claude API credits going to four Australian institutions through its AI for Science program: the Australian National University, Murdoch Children's Research Institute, the Garvan Institute of Medical Research, and Curtin University. The named workloads are concrete. ANU's John Curtin School of Medical Research is using Claude to analyse genetic sequencing data for rare diseases, with the ANU School of Computing embedding Claude into computing courses. Garvan, working with UNSW and the Centre for Population Genomics, is targeting two genomic projects, including automation of the genetic analysis bottleneck in diagnosing children with rare genetic conditions. Murdoch Children's Research Institute is also applying Claude to its stem cell medicine program for childhood heart disease. Curtin's data science institute is using Claude across health sciences, humanities, business, law, science, and engineering research.

A separate program announced on the same trip offers up to USD$50,000 (about AUD$72,000) in Claude API credits to VC-backed deep-tech startups working on drug discovery, materials science, climate modeling, and medical diagnostics. Anthropic also flagged that it is exploring investments in data center infrastructure and energy in Australia, aligned with the government's recently announced data center expectations. A Sydney office is being set up, with local team and leadership announcements promised in the coming weeks.

Why It Matters

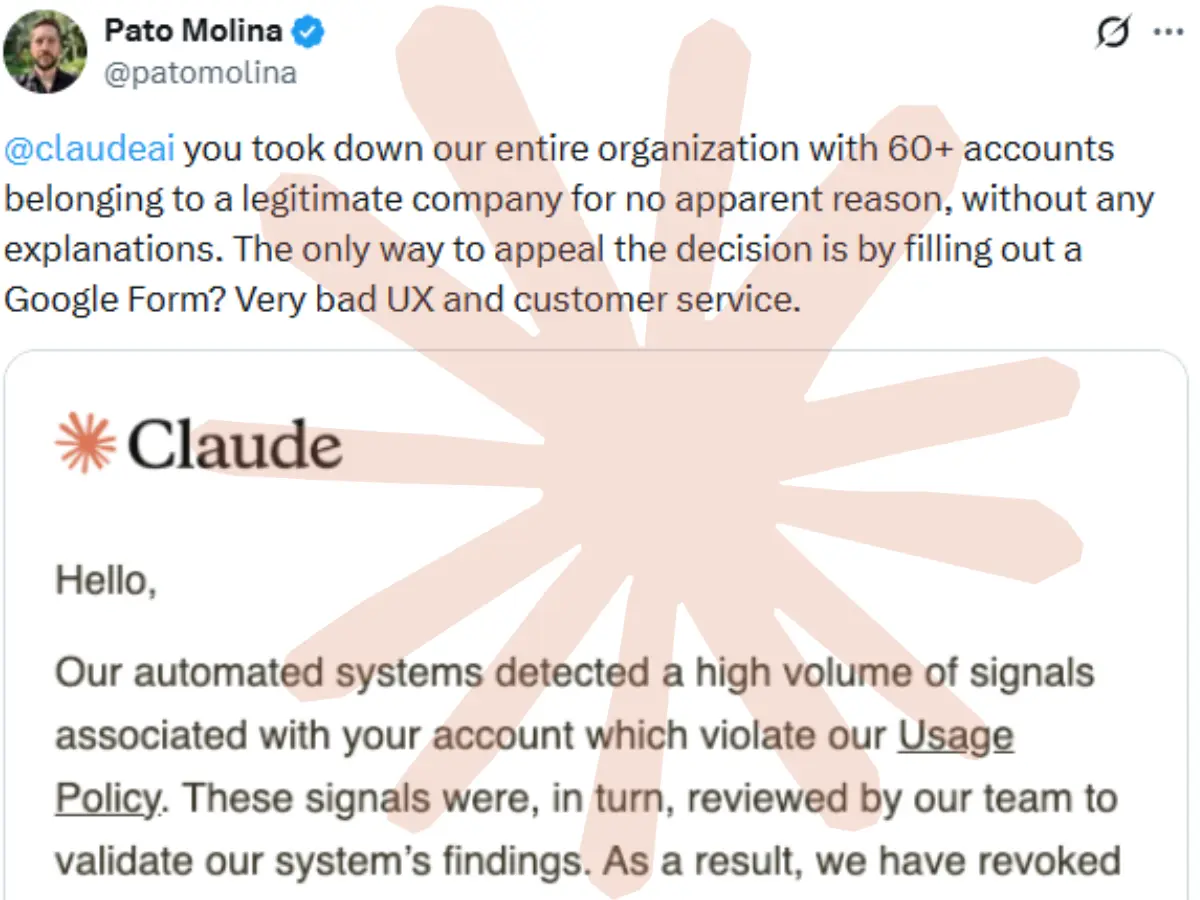

The single most important sentence in the announcement is the one that almost reads as a footnote. Anthropic now has formal safety-institute arrangements with the US, UK, Japan, and Australia. That is a four-government perimeter, three of the Five Eyes plus Japan, built in roughly eighteen months. The structural read is straightforward: when allied governments harmonise their AI safety evaluation regimes, the vendor that is already inside the room writing the evaluation methodology becomes the default reference model for any sovereign procurement that downstream agencies run. OpenAI has its own arrangements, but it does not yet have this exact set, in this exact configuration, with this exact cadence. Anthropic is moving faster on the policy flank than on the product flank, and the gap is starting to look deliberate.

The economics underneath the science investments are equally telling. AUD$3 million in Claude API credits across four institutions is, in raw cost-of-goods terms, a rounding error against Anthropic's compute spend. What it buys is reference customers inside the institutions that will be cited in every Australian government health-AI, genomics, and computing-curriculum tender for the next three to five years. ANU is not a customer. ANU is a brochure. Garvan is not a customer. Garvan is a case study with peer-reviewed output. Buying a sovereign reference customer with credits, rather than cash, is one of the most attractive customer-acquisition motions in the category right now, and Anthropic is running it harder than anyone else.

The vendor pattern this echoes most directly is the cloud-hyperscaler land grab of 2014 to 2018. AWS, Azure, and GCP did not win their sovereign footholds by selling into procurement. They won by seeding research institutes, university programs, and government innovation labs with credits, then watching the buyer-side evaluation teams cycle through those institutions and arrive at procurement having already standardised on the incumbent. Anthropic is running that exact playbook in 2026, on a five-year cycle, against a competitive set of OpenAI, Google DeepMind, and Mistral. The cost of running the play is one Sydney office, one MOU, and AUD$3M in credits. The prize is the default-vendor slot in every Australian Commonwealth and state-level AI evaluation that runs between now and the end of the decade.

The data-center exploration is the deepest tell. Anthropic does not announce an exploratory data-center investment in a country where it has not already modelled five-year demand. The Sydney office is the demand-capture surface. The data-center spend, if it goes ahead, is the supply-side commitment that says the demand model is real enough to write a power-purchase agreement against. Australia gets a sovereign compute story; Anthropic gets a captive market with switching costs that will be measured in years.

What Other Businesses Can Learn

If you are an operator selling AI-adjacent product into the ANZ market, into Australian public sector, or into the research and innovation ecosystem that the four named institutes anchor, the practical takeaways are immediate.

First, assume Claude is now the incumbent reference model in any Australian Commonwealth, state, or research-institute RFP that lands on your desk between now and the end of 2027. The buyer's evaluation team has touched it. The buyer's research collaborators have published with it. The buyer's safety institute has joint evaluation findings on it. Your own model selection, your reference architectures, and your case-study language need to acknowledge that gravitational pull. Pitching against Claude in Australia is now a different exercise than pitching against Claude in, say, France.

Second, watch the Anthropic Economic Index integration. Anthropic is feeding adoption data to the Australian government across natural resources, agriculture, healthcare, and financial services. If you sell vertical software into any of those four sectors, the buyer is about to have a much sharper view of where their workforce is using AI, what tasks are getting offloaded, and where the productivity story is real. That changes the question your buyer asks you in the next renewal conversation. It moves from "does your product use AI" to "where does your product sit in the workflow distribution we are now measuring centrally."

Third, the deep-tech credit program is capped at USD$50,000 (about AUD$72,000) per startup. That is enough to run a meaningful Claude workload for six to nine months for a small drug-discovery or climate-modeling team. If you are a VC-backed Australian deep-tech founder and you have not already applied, you are leaving free runway on the table.

Fourth, the Sydney office and the data-center exploration tell you where the enterprise commercial team will be heavily incentivised in the back half of 2026. Expect aggressive ANZ-region discounting on enterprise contracts, expect Claude-tuned reference architectures from local consulting partners to multiply, and expect at least one Big Four advisory firm in Sydney to publicly announce a Claude-anchored AI practice within ninety days.

Looking Ahead

In the next twelve to eighteen months, expect Anthropic to close the same shape of MOU with at least one more allied government. Canada and New Zealand are the obvious candidates, with Singapore the dark horse. Watch the ANU and Garvan publication output for citations of Claude as the analytical substrate; the first peer-reviewed clinical genomics paper that names the model becomes the artifact that every other ANZ research institute references when it negotiates its own credit allocation. The named comparable to watch is the OpenAI–UK AI Safety Institute relationship: if OpenAI moves to formalise a similar Australian arrangement before Q4, the perimeter Anthropic just built becomes contested, and the discount math on every ANZ enterprise contract changes inside a quarter.

Sources

- Australian government and Anthropic sign MOU for AI safety and research, Anthropic, accessed 2026-04-27
